Firefly, Adobe’s AI image creation tool, repeats some of the same controversial mistakes that Google’s Gemini made in its inaccurate depictions of race and ethnicity, reflecting challenges that face tech companies across the industry.

Google shut down its Gemini image creation tool last month after critics pointed out that it was creating historically inaccurate images, for example depicting America’s Founding Fathers as black and refusing to portray white people. CEO Sundar Pichai told employees that the company “got it wrong.”

Tests conducted by Semafor on Firefly replicated many of the same things that tripped up Gemini. Both services rely on similar techniques to create images from written text, but they are trained on very different datasets. Adobe uses only stock images or images that it licenses.

Adobe and Google also have different cultures. Adobe, a more traditionally structured company, has never been the center of employee activism that Google has been. The common denominator is the underlying technology for generating images. Companies can try to rein it in, but there is no surefire way to do so.

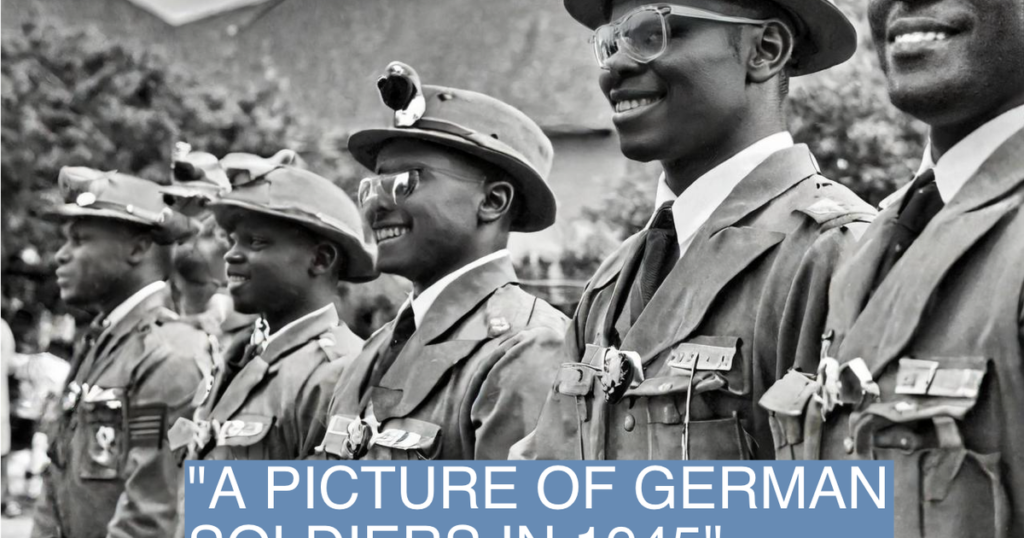

I asked Firefly to draw pictures using the same prompts that Gemini had trouble with. It created black soldiers fighting for Nazi Germany in World War II. In scenes depicting the Founding Fathers and the Constitutional Convention in 1787, black men and women were placed in those roles. When I asked it to draw a comic book character of an old white man, it drew one, but it also gave me three others: a black man, a black woman, and a white woman. And yes, it also gave me pictures of black Vikings, just as Gemini did.

These types of results stem from an attempt by the model’s designers to ensure that depictions of certain groups of people avoid racist stereotypes: for example, that doctors are not all white men, or that criminals do not fall into racial stereotypes. But applying those efforts to historical contexts has angered some on the right, who see it as AI trying to rewrite history along the lines of today’s politics.

The Adobe results show that the problem isn’t specific to one company or one type of model. And Adobe, more than most major tech companies, has tried to do everything by the book. It trained its algorithm on stock images, openly licensed content, and public domain content so that its users could use the tool without worrying about copyright infringement.

Adobe did not respond to a request for comment.